Microsoft and the R(evolution)

Over the last few years Microsoft have been building infrastructure to support the R language within a number of tools, technologies and platforms.

Microsoft R Open

Initially distributed by Revolution Analytics as Revolution R Open, this free to use and distribute version of R was rebranded Microsoft R Open after Microsoft acquired the company in 2015. The current release is based on R-3.3.3 (new distributions come out about a month after each CRAN version update).

In addition to full compatibility with R-3.3.3 and third-party packages, the distribution supports multi-threaded math libraries and the checkpoint package. Checkpoint lets the data scientist specify the exact versions of the packages a project should use, which leads to more reproducible results. Microsoft's aim is to encourage the R community to develop and release stable sets of packages that have been tested together. This should prevent many of the version conflicts between shared dependencies that appear when a new package is added to a project.

To enable the checkpoint feature, simply load the package with library(checkpoint) and set the checkpoint date.
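As a minimal sketch of what that looks like in a script, assuming the checkpoint package is installed and the snapshot server is reachable. The date and the ggplot2 package are illustrative choices, not taken from a real project:

```r
# Load checkpoint before any other package.
library(checkpoint)

# Pin the project to the package versions that were on CRAN on this date.
# The date is an illustrative assumption; pick one that suits your R version.
checkpoint("2017-04-01")

# Packages loaded from here on come from the snapshot library.
# ggplot2 is only an example of a package the project might use.
library(ggplot2)
```

Rolling back to a consistent state is then a matter of restoring the earlier date in the checkpoint() call and re-running the script.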

Personally, I find this feature useful for larger projects where some packages have been updated for new versions of R and its dependencies while other packages are (sometimes) several versions behind. The checkpoint allows me to try new releases of packages with the knowledge that I can roll back to a consistent state if I discover any issues. Checkpoints also help those learning R, who often find that example scripts no longer work due to package changes.

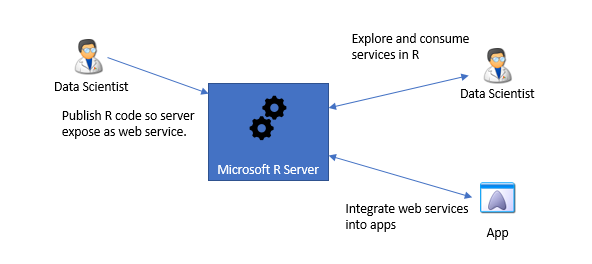

R Server

R Server is an enterprise-class server for hosting and managing parallel and distributed workloads of R processes. It runs on both Windows and Linux and supports both Hadoop and Apache Spark clusters. R Server uses Microsoft R Open as the R runtime and contains the following features:

Operational Analytics

Big data projects often hit issues when we need to operationalize the R scripts, packages and models developed by the data scientist. This can lead to months of work and rework to get the models deployed and used by the business, none of which suits the agile nature of today’s business. R Server helps with this operationalization by allowing R analytics to be exposed as a web service and by generating Swagger-based REST APIs with one line of code, so the service can be easily consumed from client languages.

This platform allows models to be developed on premises while the final scoring of the model runs in the cloud. Integration with LDAP (or Azure AD) and HTTPS with SSL/TLS 1.2 allows this to be done in a secure fashion.
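The publishing step can be sketched roughly as follows. This assumes the mrsdeploy package that ships with R Server 9.x (it is not named in the text above), an operationalized server at a placeholder address, and placeholder credentials. The model, the function and the service name are hypothetical:

```r
# Assumes an operationalized R Server and the mrsdeploy package.
library(mrsdeploy)

# Placeholder endpoint and credentials; replace with your own.
remoteLogin("http://localhost:12800",
            username = "admin",
            password = "<password>",
            session = FALSE)

# A small illustrative model and the scoring function to expose.
model <- glm(am ~ hp + wt, data = mtcars, family = binomial)
manualTransmission <- function(hp, wt) {
  newdata <- data.frame(hp = hp, wt = wt)
  predict(model, newdata, type = "response")
}

# Publish the function as a versioned web service.
api <- publishService(
  "mtService",                 # hypothetical service name
  code = manualTransmission,
  model = model,
  inputs = list(hp = "numeric", wt = "numeric"),
  outputs = list(answer = "numeric"),
  v = "v1.0.0"
)

# One line produces the Swagger description of the REST API.
swagger <- api$swagger()
cat(swagger, file = "swagger.json")

remoteLogout()
```

The resulting swagger.json can be fed to a Swagger code generator to build a client in the language of your choice.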

ScaleR

ScaleR is a proprietary package in R Client and R Server that is used for handling large data sets. One issue that data scientists struggle with is that R holds all of its data in memory. Even on a large machine this becomes a problem once R exceeds the memory or processing capabilities of the server. ScaleR functions carry the rx prefix. For example, the following code reads a 600,000-row file in three blocks of 200,000 rows each.

    inFile <- file.path(rxGetOption("sampleDataDir"), "AirlineDemoSmall.csv")
    airData <- rxImport(inData = inFile, outFile = "airExample.xdf",
                        stringsAsFactors = TRUE, missingValueString = "M",
                        rowsPerRead = 200000)

Passing airExample.xdf as the outFile argument writes an XDF file, which can be referenced like a data frame but reduces the memory required because all 600,000 rows are no longer held in memory.
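As a rough sketch of what referencing the file like a data frame means in practice, the snippet below points a data source at the XDF file written by the import and summarises it without loading it into memory. It assumes RevoScaleR is installed, that airExample.xdf exists in the working directory, and that the columns are named ArrDelay and DayOfWeek as in the AirlineDemoSmall sample data:

```r
# Assumes an R Client or R Server installation that provides RevoScaleR.
library(RevoScaleR)

# Refer to the XDF file on disk rather than an in-memory data frame.
xdf <- RxXdfData("airExample.xdf")

# Show the row count, block count and variable information.
rxGetInfo(xdf, getVarInfo = TRUE)

# Summary statistics are computed block by block.
rxSummary(~ ArrDelay + DayOfWeek, data = xdf)
```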

On R Server, ScaleR also allows chunking of the data: the data is split into multiple parts for processing, and the results are reassembled for later analysis.
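The idea behind chunking can be illustrated in plain base R. This is only a sketch of the technique, not how ScaleR implements it: the file, the column name and the helper chunked_mean() are made up for the example. Each block is read and reduced to a partial result, and the partial results are combined at the end.

```r
# Write a small CSV to a temporary file so the example is self-contained.
csv_path <- tempfile(fileext = ".csv")
write.csv(data.frame(delay = 1:10), csv_path, row.names = FALSE)

# Hypothetical helper: mean of one column, reading a few rows at a time.
chunked_mean <- function(path, column, rows_per_read) {
  con <- file(path, open = "r")
  on.exit(close(con))

  # The first line holds the column names (quoted by write.csv).
  header <- gsub('"', "", strsplit(readLines(con, n = 1), ",")[[1]])

  total <- 0
  count <- 0
  repeat {
    lines <- readLines(con, n = rows_per_read)
    if (length(lines) == 0) break              # end of file
    chunk <- read.csv(text = lines, header = FALSE, col.names = header)
    total <- total + sum(chunk[[column]])      # partial result for this block
    count <- count + nrow(chunk)
  }
  total / count                                # combine the partial results
}

result <- chunked_mean(csv_path, "delay", rows_per_read = 4)
```

Only rows_per_read rows are held in memory at any time, which is the same trade-off the rowsPerRead argument controls in ScaleR.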

Note that R Client still requires the data to fit into memory. However, because R Client and R Tools for Visual Studio allow me to connect to a remote server, it is simple to iterate quickly with a small data set locally on the client and then push the script to an R Server running on a large VM to process the full data set.

Machine Learning Algorithms

MicrosoftML provides a set of machine learning algorithms for R Server. It contains the following algorithms:

| Algorithm | ML task supported |
|---|---|
| rxFastLinear() | Binary classification, linear regression |
| rxOneClassSvm() | Anomaly detection |
| rxFastTrees() | Binary classification, regression |
| rxFastForest() | Binary classification, regression |
| rxNeuralNet() | Binary and multiclass classification, regression |
| rxLogisticRegression() | Binary and multiclass classification |

In addition to these algorithms, rxEnsemble() trains a number of models of various types to obtain better predictive performance than could be obtained with a single model.
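A minimal sketch of training and scoring with one of these algorithms, here the boosted-tree trainer rxFastTrees(), might look like this. It assumes an installation that includes the MicrosoftML package. The infert data set ships with base R, and the choice of predictors is purely illustrative:

```r
# Assumes an R Server (or R Client) installation that includes MicrosoftML.
library(MicrosoftML)

# Derive a logical label for binary classification from the infert data.
training <- infert
training$isCase <- training$case == 1

# Train a boosted-tree binary classifier.
model <- rxFastTrees(isCase ~ age + parity + spontaneous + induced,
                     data = training, type = "binary")

# Score the training data and keep the true label alongside the predictions.
scores <- rxPredict(model, data = training, extraVarsToWrite = "isCase")
head(scores)
```

In a real project the model would be scored against held-out data rather than the training set.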

SQL Server 2016

In SQL Server 2016 Microsoft introduced SQL Server R Services, which integrates the R language with the SQL Server database engine. R Services runs in the database and integrates R with the SQL language, allowing R scripts to run close to the data and avoiding the need for data replication and its associated cost and security issues.

In SQL Server 2017 Microsoft is planning to add support for scripts running in Python in addition to R.

HDInsight

As part of Azure, Microsoft provide R Server for HDInsight, which offers the same R Server features running in the Azure cloud.

R Tools for Visual Studio

For the longest time if you “bing’d” Visual Studio and R the top post was from a question on StackOverflow where a user asked if you could use Visual Studio to run R.

Many of the answers scoffed at the very idea that MS would do such a thing. However, “never say never”, as this week MS released R Tools for Visual Studio (RTVS), which integrates R into the Visual Studio IDE.

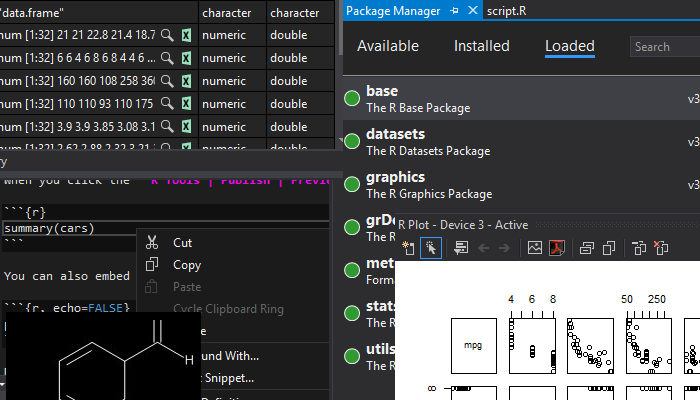

RTVS brings the full power of Visual Studio to the R language, including syntax highlighting, IntelliSense, interactive code execution, code navigation, automatic comments and formatting, and extensive debugging features.

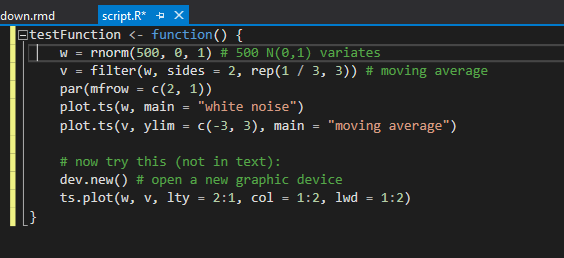

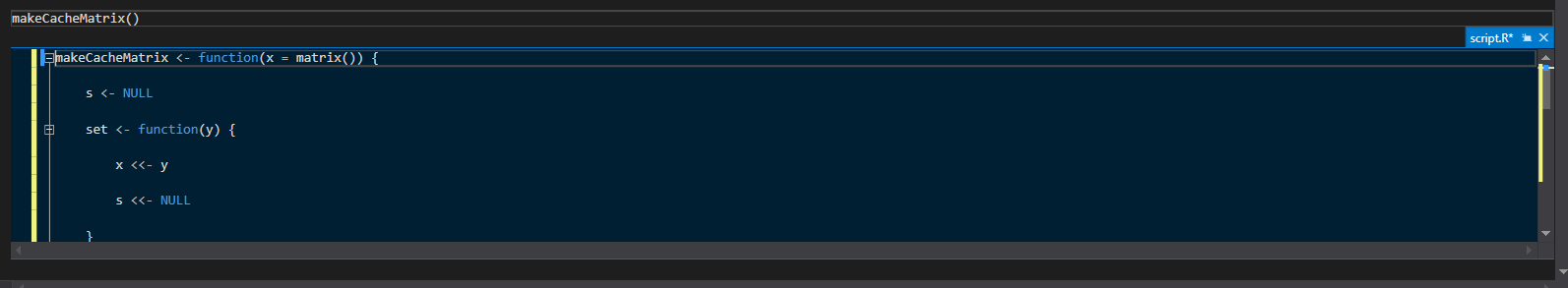

Syntax Highlighting

Visual Studio has been extended to provide syntax coloring of code and documentation, clickable hyperlinks in command and embedded references.

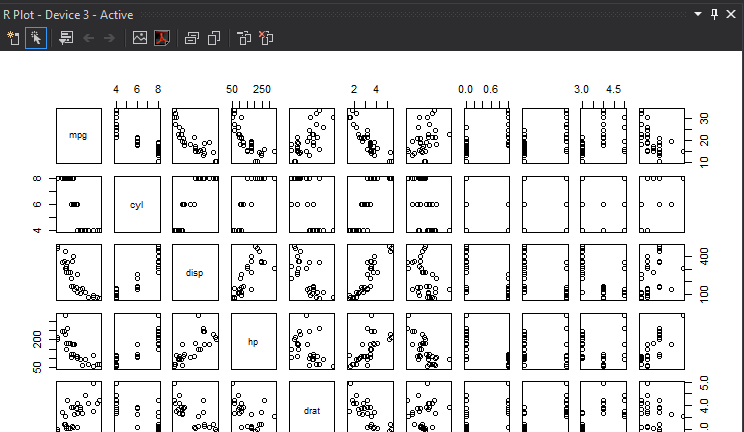

RTVS also supports the full set of plot libraries. For example, plot(mtcars) produces the following visualization. Note that the plot windows can be docked not only within the Visual Studio IDE but also on a separate monitor. This ability to dock a window outside of the IDE is useful when developing plots.

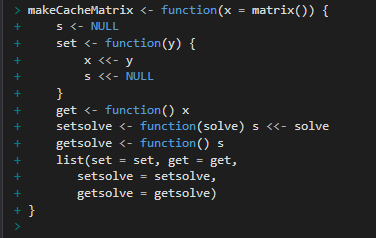

Interactive Code Execution

When developing R code it's often useful to execute code immediately without running the whole script. Rather than cutting and pasting the code into the execution window, the user can select the code and press CTRL+ENTER to send it to the execution window.

Code Navigation

One of the strengths of Visual Studio is its code navigation shortcuts. Pressing F12 takes you to the function definition, or the developer can use the mini editor to peek at the code within the function without navigating to it.

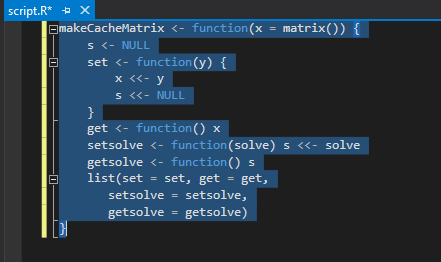

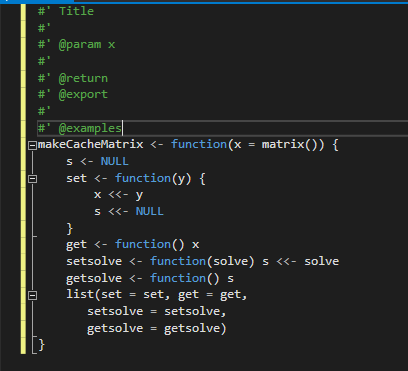

Automatic Comments and Formatting

Typing ### above a function will auto-generate a Roxygen comment for the function.
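For readers who have not used Roxygen, a documented function looks something like the sketch below. The function delay_hours() and its wording are invented for illustration, and the exact template RTVS generates may differ:

```r
#' Convert a delay in minutes to hours
#'
#' @param minutes Numeric vector of delays in minutes.
#' @return Numeric vector of the same length, in hours.
#' @examples
#' delay_hours(c(30, 90))
delay_hours <- function(minutes) {
  minutes / 60
}
```

Each #' line is an ordinary R comment, so the script still runs unchanged. The tags (@param, @return, @examples) are what the roxygen2 package turns into help pages.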

Debugging

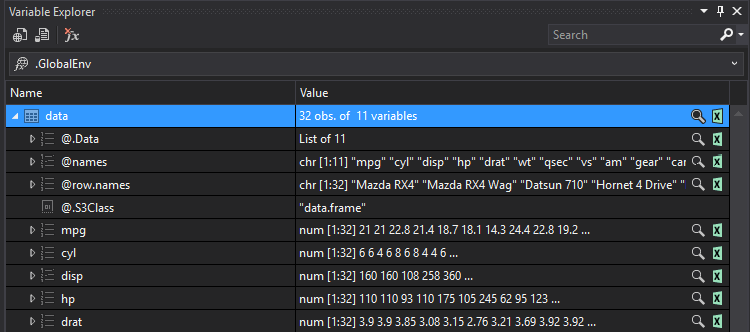

In addition to supporting breakpoints and watch windows, RTVS supports data tips, which allow the user to hover over a variable and inspect its properties.

RTVS also provides a table view to visualize data frames, which can then be exported to Excel. Given the issues with Excel interpreting value types (and getting it wrong), I would personally stay clear of any feature that puts my data into Excel.

RMarkdown

One of my major complaints with the early access and preview releases of RTVS was the lack of support for Markdown. This has now been resolved with full support for RMarkdown, including export to HTML, PDF and Word. To view the markdown as HTML you will need to install the “pandoc” tools, and to view it as PDF you will also need to install LaTeX.
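Outside the IDE, the same export can be driven from the R console. The sketch below assumes the rmarkdown package and writes a throwaway document to a temporary file. It only renders when both rmarkdown and pandoc are available, which mirrors the requirement above:

```r
# Write a tiny R Markdown document to a temporary file (illustrative content).
rmd_path <- tempfile(fileext = ".Rmd")
writeLines(c(
  "---",
  "title: \"Example report\"",
  "---",
  "",
  "The mean of `mtcars$mpg` is `r mean(mtcars$mpg)`."
), rmd_path)

# Render to HTML only when rmarkdown and pandoc are both available.
html_path <- NULL
if (requireNamespace("rmarkdown", quietly = TRUE) &&
    rmarkdown::pandoc_available()) {
  html_path <- rmarkdown::render(rmd_path,
                                 output_format = "html_document",
                                 quiet = TRUE)
}
```

Switching output_format to "pdf_document" produces a PDF instead, provided a LaTeX installation is present.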

The 1.0 release also includes support for creating and running Shiny apps, however the release is missing templates to create the app.R or server.R from the File>New Item menu.

Remote code execution

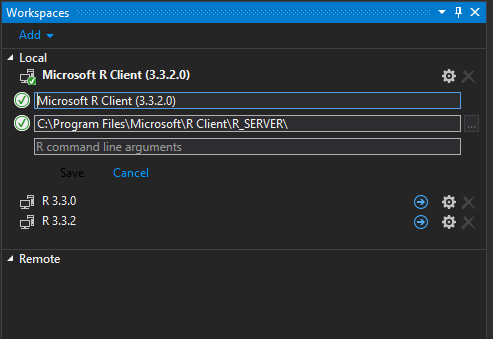

In addition to running the code (in either R Open or CRAN R) on the machine where RTVS is installed, you can choose to execute the code on an R Server (either local or remote, including Azure remote machines). Once configured, local and remote R environments appear in the Workspace window, and switching between these workspaces is as simple as selecting the required R version or server.

Workspaces allow the creation of scripts that can be debugged locally and then pushed to the server for integration and performance testing.

Wrap Up

As with any version 1 product there are some defects and missing features.

The current release is not compatible with the (also newly released) VS2017. The Data Science “workload” was removed from VS2017 just before release so hopefully it will re-appear very soon.

The 1.0 release is missing tools and templates to generate packages. Given the need for the R community to embrace reuse as a way to achieve reproducible results, not having the tools to generate packages is a significant issue. In addition, Visual Studio has a great C/C++ development environment. Integrating it so developers could step through R and the lower-level C/C++ code would be a significant improvement over RStudio, where the experience of developing packages containing R and C/C++ is not great.

Update: the Data Science “workload” has reappeared in Visual Studio 2017 Update 2, which is now released.

Update: MS have released R Open 3.4.