The Age of the Connected Scientist.

Paper, paper everywhere, as far as the eye can see.

When I first started working in a lab, fresh out of university, everything was recorded in paper notebooks (OK, I might be dating myself here). At the end of each day we wrote up the day's experiment, pasted in printouts from balances, signed across the printout and the page (to show that the printout had not been tampered with) and signed the bottom of each page. At the end of the week our notebooks were collected and reviewed by the manager, who then countersigned each page. In our small lab, completed notebooks were shipped to offsite storage to free up space. Retrieving a notebook took 3 weeks, and it was often quicker to re-run a test than to wait for the notebook to be returned.

When I moved from research into manufacturing I started to work with paper Standard Operating Procedures (SOPs). We followed the procedure as defined in the document, entered the values (sometimes sticking in equipment printouts) and signed each value and page. The SOP was then sent to the QA department, who reviewed and countersigned it and provided us with the “fit for use” stickers for the batch of material we had created. We had simple electronic file shares for some of the data, but finding that information proved very time consuming.

These processes generated a sizable amount of paper but little in the way of reusable data.

Enter the ELN

Roll forward a couple of years and we start to see LIMS (Laboratory Information Management Systems) replace some of the paper. ELNs (Electronic Laboratory Notebooks) then either replace LIMS or are used alongside them. Much of this software is implemented as “paper on glass”, where the system capabilities are minimal and the system is used mainly as a general solution for intellectual property protection. Many software vendors and customers would argue that these systems are also being used for knowledge management. However, while most of these systems excel at manual data entry, they do very little to turn that data into knowledge that can be used to drive new work.

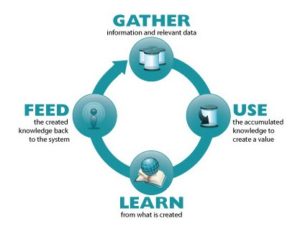

To close the data-to-knowledge gap, companies turned to a data warehouse approach to transform the data into a more digestible form that could be searched. However, the warehouse structures were often inflexible and tuned only to the questions that needed answering when the warehouse was built. To reduce the turnaround time in converting data to knowledge we need systems that allow new linkages to be discovered as data is gathered and recorded, and that store the information in a manner that allows multiple extractions, reclassifications and processing without significant IT overhead.

ELNs also failed to solve a significant issue with the way they fit into the scientist's day-to-day activity: many scientists continued to use the ELN as they would a paper notebook, recording results on scraps of paper in the lab and then transferring these into the ELN at the end of the day. In the Quality lab the implementation of electronic signatures prevents this; however, in many cases the scientist still enters the data by hand before the record is signed. Where the ELN has a native capability to capture information directly from instruments, these features are often cumbersome, sometimes requiring the scientist to click a table cell to move the cursor or press multiple buttons to transfer the data.

Many software solutions have ignored the benefits that an ELN could offer: rather than freeing the scientist from the work of transcribing information into an electronic document, they simply replaced pen and paper with a computer and mouse.

The “Connected Scientist”

As software developers who provide solutions to the scientific community we need to rethink the way that we connect the scientist to the lab, to each other and to data. Providing scientists with a mobile device is a start; however, deploying the same desktop applications onto a tablet without changing the interaction model simply carries the same issues into a new form factor.

Connected Scientist and the Lab

Many laboratory devices can connect to the network; however, if we still require the scientist to interact with a tablet to get the data from the device into their ELN then we continue to put barriers between the scientist and their work.

In an ideal world the scientist would approach the balance and have the balance recognize who she is, read the barcode of the material needing to be weighed, capture the weight and populate the ELN with the recorded value. All this should happen with the same steps as if the scientist were just weighing the material. In addition to not interrupting the scientist's work, these steps need to happen in a manner that conforms to GMP/GxP.

A lofty goal perhaps, but facial recognition technologies (as found in “Windows Hello”) and Kinect/HoloLens could be combined to recognize the user, samples and equipment, the latter via barcodes. Once we have verified the user and identified the samples and equipment, the networked equipment could upload the data to processing services. Many pieces of laboratory equipment are not connected to the network; however, low-cost IoT (Internet of Things) technologies (Raspberry Pi and Arduino boards) could be used as a bridge between the device and the network. These devices could also include software that securely associates the information from the instrument with the sample and ELN document, without the need to purchase new instruments. IoT devices could also be used to monitor equipment and give real-time status updates on a run, notify users when runs have finished and their results are available, tell the next scientist in the queue that the machine is available, or flag when the equipment has encountered an error.
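To make the IoT bridge idea a little more concrete, here is a minimal Python sketch of the kind of code that might run on a Raspberry Pi sitting between a balance and the network. Everything in it is an assumption for illustration: the balance output format, the `WeighingRecord` fields and the function names are hypothetical, the user and sample identifiers are assumed to have come from whatever recognition and barcode steps precede the weighing, and nothing here is a validated GMP/GxP implementation.

```python
import json
import re
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional, Tuple

# Hypothetical balance output line, e.g. "N      +   12.3456 g".
# Real instruments each have their own format; this pattern is an assumption.
READING_PATTERN = re.compile(r"([+-]?)\s*(\d+(?:\.\d+)?)\s*(mg|g|kg)\b")


@dataclass
class WeighingRecord:
    """One weight, tied to who weighed what on which instrument."""
    user_id: str
    sample_barcode: str
    instrument_id: str
    value: float
    unit: str
    captured_at: str  # ISO 8601 timestamp


def parse_reading(line: str) -> Tuple[float, str]:
    """Extract (value, unit) from one line of balance output."""
    match = READING_PATTERN.search(line)
    if match is None:
        raise ValueError(f"unrecognized balance output: {line!r}")
    sign, digits, unit = match.groups()
    value = float(digits)
    return (-value if sign == "-" else value), unit


def build_record(line: str, user_id: str, sample_barcode: str,
                 instrument_id: str,
                 captured_at: Optional[datetime] = None) -> WeighingRecord:
    """Associate a raw reading with the verified user, sample and instrument."""
    value, unit = parse_reading(line)
    timestamp = captured_at or datetime.now(timezone.utc)
    return WeighingRecord(user_id, sample_barcode, instrument_id,
                          value, unit, timestamp.isoformat())


def to_payload(record: WeighingRecord) -> str:
    """Serialize the record as JSON, ready to send to an ELN or processing service."""
    return json.dumps(asdict(record), sort_keys=True)


if __name__ == "__main__":
    record = build_record("N      +   12.3456 g", user_id="jsmith",
                          sample_barcode="SMP-0001", instrument_id="BAL-01")
    print(to_payload(record))
```

The point of the sketch is that the scientist's only action is the weighing itself; the association with user, sample and instrument happens in the bridge rather than through extra clicks in the ELN.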

In the analytical lab, as we start to record the time a process takes, we can better plan the throughput of samples for testing. In addition we can model how adding people or equipment could increase the capacity of the lab. Today much of this information is stored in spreadsheets and is opaque to the rest of the company.
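As a rough illustration of that kind of capacity modelling, the Python sketch below estimates daily sample throughput from recorded process times and shows the effect of adding an analyst or an instrument. The model is deliberately naive and is my own assumption: each sample needs some hands-on analyst time and some instrument time, and whichever resource runs out first limits the day. The names `LabConfig` and `daily_capacity` and all the figures are hypothetical.

```python
import math
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class LabConfig:
    analysts: int
    instruments: int
    shift_minutes: float = 480.0  # assumed 8-hour working day


def daily_capacity(config: LabConfig,
                   analyst_minutes_per_sample: float,
                   instrument_minutes_per_sample: float) -> int:
    """Samples per day, limited by whichever resource is the bottleneck."""
    by_analyst = config.analysts * config.shift_minutes / analyst_minutes_per_sample
    by_instrument = config.instruments * config.shift_minutes / instrument_minutes_per_sample
    return math.floor(min(by_analyst, by_instrument))


# Illustrative durations (minutes) of the kind captured automatically during runs.
analyst_times = [18, 22, 20]
instrument_times = [28, 32, 30]

analyst_avg = mean(analyst_times)        # average hands-on time per sample
instrument_avg = mean(instrument_times)  # average instrument time per sample

baseline = LabConfig(analysts=2, instruments=1)
extra_analyst = LabConfig(analysts=3, instruments=1)
extra_instrument = LabConfig(analysts=2, instruments=2)

if __name__ == "__main__":
    for name, cfg in [("baseline", baseline),
                      ("extra analyst", extra_analyst),
                      ("extra instrument", extra_instrument)]:
        print(name, daily_capacity(cfg, analyst_avg, instrument_avg))
```

With these made-up numbers the instrument is the bottleneck, so a third analyst changes nothing while a second instrument doubles the capacity. A real lab would need queueing, batching and maintenance downtime in the model, but even this much is hard to see when the timings live in scattered spreadsheets.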

In the laboratory Virtual Reality is not going to be a big player; there are too many safety issues with a headset that obscures 100% of your vision. However, Augmented Reality could play a part: holograms could display information about the state of the equipment or the length of time left on an equipment run, or allow the scientist to see the next step of the recipe without needing to pick up a tablet and scroll to find the right step in the process. Although Microsoft’s HoloLens won’t be mature for a couple more years, as people gain familiarity with the technology I believe that we will start to see practical applications for it in the lab.

Connected Scientist and Collaboration

Today scientists collaborate with colleagues in the same lab, across departments within their organization and with external teams. Often the scientist needs to ask collaborators to carry out work on their behalf, then track that work and communicate with the people doing it. Much of this communication is handled via email, with work being scheduled via Excel spreadsheets. Even when companies have implemented shared storage (Google Docs or SharePoint), these technologies simply replace the document in the email with a link to a document on a shared server.

The Cloud is going to play a significant part in facilitating collaboration. As more and more Cloud vendors gain certification with standards bodies, companies are looking to shift many of their collaboration platforms to the Cloud. However, simply using the Cloud as a file share ignores the processing power available within the Cloud to provide a true, secure collaboration platform.

As software vendors we need to provide collaboration tools directly within the software that the scientist uses on a daily basis. Allowing project teams to be spun up, to collaborate on shared documents or experiments, and then to be wound down when the project ends would provide a forum in which scientists within the organization, academia and CROs can collaborate.

In the collaboration space mobile devices again play a part. For example, when a test has been completed by a CRO and the results are available, I would like to receive a text on my phone. If on review of the data I have some questions, I want to tag the data and send a notification to the CRO, all within the application. With shared electronic whiteboards, real-time virtual conferences and collaboration built directly into applications, we start to expose the limitations of email as a collaboration tool.

Connected Scientist and Data

We need to move the scientist closer to the data to allow the scientist to make more informed decisions. To do this we need to provide the scientist with the data within the context of her current task. Many of today’s solutions feed data to the scientist via a report or dashboard, or require the scientist to switch between multiple applications and create complex searches in order to retrieve and visualize the data; these solutions provide little benefit to the end user. In addition to providing the information, we need to let the scientist drill down to the original data (provided they have the correct permissions). As data is being collected we want it to be tagged, categorized and associated with the samples and instruments so that it can be mined at a later date.

Many companies are looking to store the original data in a data lake from which information can be extracted, pivoted and associated with additional data and provided to the scientist. The original data can then be re-extracted, re-pivoted and recast at a later date to provide new insights.
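A toy Python sketch of the "keep the original, recast it later" idea follows. The raw records stay untouched and the same `pivot` helper answers different questions simply by grouping on a different field. The record layout, field names and values are invented for illustration, and a real data lake would of course sit on proper storage rather than a Python list.

```python
from collections import defaultdict
from statistics import mean
from typing import Dict, Iterable, Mapping

# Raw results kept exactly as captured, each tagged with sample and instrument.
RAW_RESULTS = [
    {"sample": "S1", "instrument": "HPLC-1", "assay": "purity", "value": 98.0},
    {"sample": "S1", "instrument": "HPLC-2", "assay": "purity", "value": 99.0},
    {"sample": "S2", "instrument": "HPLC-1", "assay": "purity", "value": 97.0},
]


def pivot(records: Iterable[Mapping], key: str,
          value_field: str = "value") -> Dict[str, float]:
    """Group raw records by any tag and average the chosen value field."""
    groups = defaultdict(list)
    for record in records:
        groups[record[key]].append(record[value_field])
    return {group: mean(values) for group, values in groups.items()}


if __name__ == "__main__":
    # Today's question: how pure is each sample?
    print(pivot(RAW_RESULTS, "sample"))
    # Tomorrow's question, same raw data: do the instruments agree?
    print(pivot(RAW_RESULTS, "instrument"))
```

Because nothing is thrown away at capture time, a question nobody thought of when the data was recorded can still be answered without rebuilding the store.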

As the amount of reusable information increases, we can start to use machine learning to inform the decisions that scientists make and to run predictive models without the scientist needing to run the physical experiment.

Digital Lab

The move from paper-based to electronic notebooks was a significant change within the scientific community; however, the ELN is the starting point of the journey and not the destination. Many industries have already started to reap the rewards of new technologies that capture, extract and socialize large amounts of data; software vendors now need to bring these capabilities into their products in order to truly unlock the knowledge for the scientist.
